Docker to Kubernetes Migration and Modernization for a Cloud-Ready Microservices Platform

To enable automated scaling, secure operations, and high availability

A fast-growing SaaS company was running microservices on standalone Docker hosts. The setup worked at first, but as traffic surged, the team struggled with inconsistent deployments, fragile scaling, and growing operational overhead. The company needed a structured approach to migrating from Docker to Kubernetes and modernizing its infrastructure into a resilient, cloud-native platform.

To support this transformation, the company partnered with Rishabh Software on a Docker to Kubernetes migration and modernization initiative designed to automate operations, improve reliability, and support large-scale workloads.

Capability

Cloud Application Development

Industry

SaaS

Country

United States

Key Features

The organization had reached the limits of its Docker host architecture and needed to migrate from Docker to Kubernetes to support reliable scaling and standardized deployments. Scaling took manual effort, and every change carried some risk. We set out to build a Kubernetes-based platform that delivered six key capabilities:

High-Availability Container Orchestration

We introduced automated container orchestration. The platform now handles service scheduling, rolling updates, and self-healing on its own, so applications keep running through infrastructure failures and routine updates.
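Rolling updates stay safe because a Kubernetes Deployment bounds how many extra pods may exist during a rollout (`maxSurge`) and how many may be unavailable (`maxUnavailable`). The sketch below is not code from this project; it is a minimal model of the documented rounding rules (percentage surge rounds up, percentage unavailability rounds down, both default to 25%) showing the capacity floor a rollout never drops below. The replica counts are illustrative assumptions.

```python
def rolling_update_bounds(replicas: int, max_surge: str = "25%",
                          max_unavailable: str = "25%") -> tuple[int, int]:
    """Return (max_total_pods, min_available_pods) for a RollingUpdate rollout.

    Models the documented Deployment rules: a percentage maxSurge rounds up,
    a percentage maxUnavailable rounds down, and both default to 25%.
    """

    def resolve(value: str, round_up: bool) -> int:
        if value.endswith("%"):
            scaled = replicas * int(value[:-1])
            # Integer ceiling/floor division avoids floating-point surprises.
            return -(-scaled // 100) if round_up else scaled // 100
        return int(value)  # an absolute pod count

    surge = resolve(max_surge, round_up=True)
    unavailable = resolve(max_unavailable, round_up=False)
    if surge == 0 and unavailable == 0:
        # Kubernetes allows one unavailable pod here so the rollout can progress.
        unavailable = 1
    return replicas + surge, replicas - unavailable


if __name__ == "__main__":
    for n in (4, 10):
        high, low = rolling_update_bounds(n)
        print(f"{n} replicas: at most {high} pods, at least {low} available")
```

With the defaults, a 10-replica service never runs more than 13 pods or fewer than 8 available pods while a new version rolls out.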

Standardized Application Deployment Workflows

We implemented controlled release processes that allow engineering teams to deploy application updates through structured and traceable workflows.

Secure Management of Application Credentials

We ensured application credentials, tokens, and certificates are securely stored and accessed through controlled policies. Credentials aren’t floating around in plain text anymore.

Consistent and Repeatable Infrastructure Environments

We established provisioning practices that create identical environments for Development, QA, and Production. This eliminates configuration drift and makes deployments predictable.

Elastic Scaling for Microservices Workloads

We enabled automatic scaling of compute resources based on real-time demand. This allows the platform to handle traffic spikes smoothly without manual intervention.
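Kubernetes usually provides this kind of demand-based scaling through the HorizontalPodAutoscaler, whose documented core rule is `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, skipped when the ratio is within a tolerance (0.1 by default). The case study does not specify the autoscaler configuration, so the sketch below is an illustrative assumption: a simplified, single-metric version of that rule with hypothetical replica limits.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 10, tolerance: float = 0.1) -> int:
    """Approximate the HorizontalPodAutoscaler rule for a single metric.

    desired = ceil(current_replicas * current_metric / target_metric),
    left unchanged inside the tolerance band, then clamped to the limits.
    """
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas  # close enough to target: no change
    else:
        desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # 4 pods averaging 90% CPU against a 60% target scale out to 6 pods.
    print(desired_replicas(4, current_metric=90, target_metric=60))
```

The real controller also accounts for unready pods, missing metrics, and a scale-down stabilization window (300 seconds by default), which this sketch leaves out.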

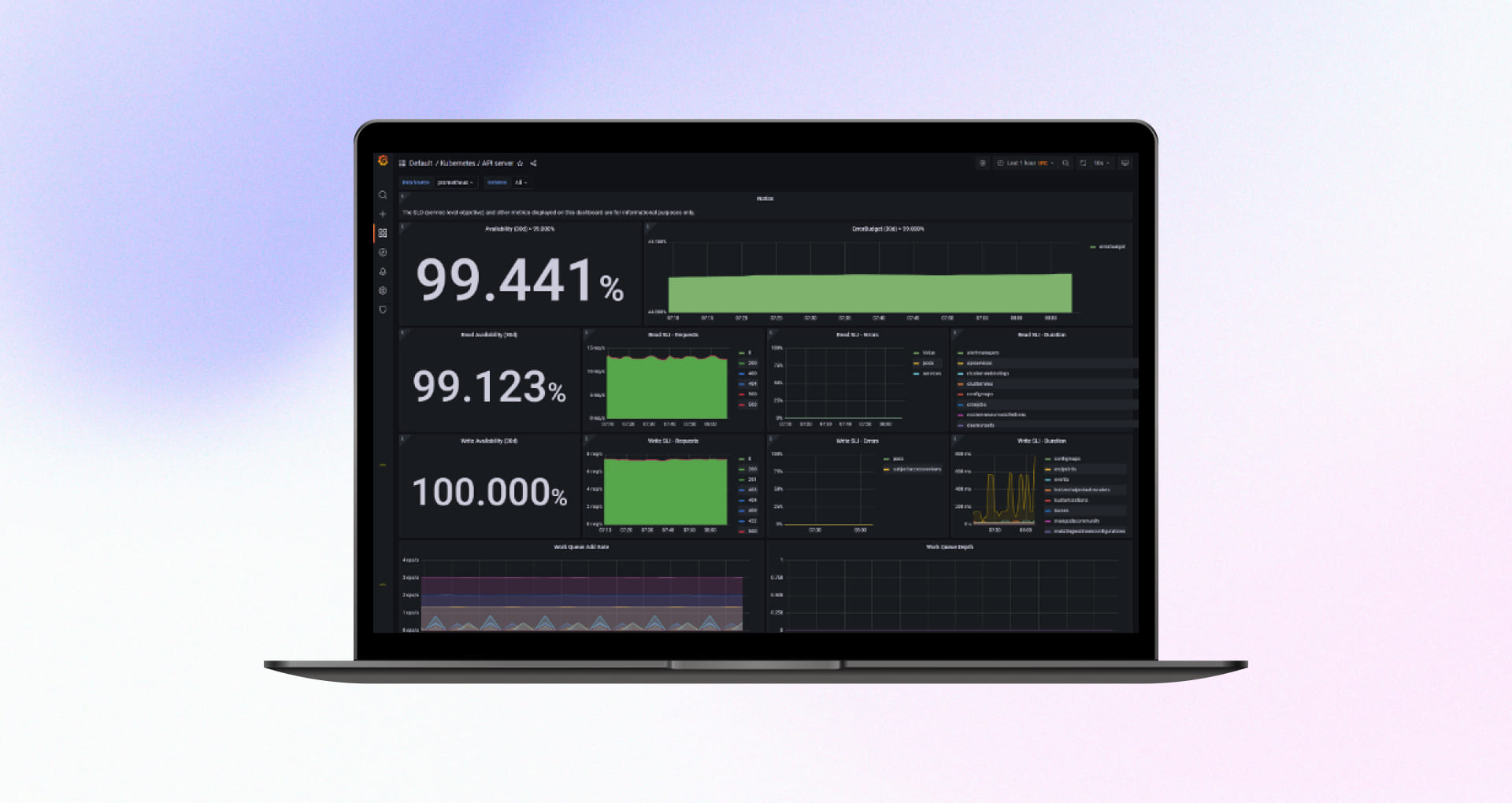

Centralized Observability and Operational Visibility

We connected every service to a single observability stack. Teams can view metrics, logs, and alerts in real time, which helps them detect issues early and respond before they escalate.

Challenges

On-prem Docker environments were configured manually, which made it difficult to replicate environments or scale effectively.

There was no structured load testing or baseline performance data, which made it hard to plan for peak usage.

Without a centralized secrets management solution, critical credentials were stored in weakly secured locations, in violation of compliance standards.

There were no automated backup or recovery processes for workloads or persistent data.

The absence of an orchestration framework led to downtime during hardware failures or high-load events.

Solutions

Our goal was to migrate from Docker to Kubernetes while building a resilient platform foundation that combined automation, security, and reliability. Here is how we architected the solution:

Multi-AZ Kubernetes Cluster Deployment

We deployed a production-grade Kubernetes cluster across multiple availability zones. Together with rolling updates and self-healing, this lets applications update in place, replace failed components, and absorb traffic spikes without users noticing a disruption. If a node fails, Kubernetes automatically reschedules its workloads onto healthy nodes.
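Multi-AZ resilience depends on replicas actually being spread across zones. Kubernetes expresses this with `topologySpreadConstraints` (a `maxSkew` limit over `topology.kubernetes.io/zone`); whether this project used that mechanism is not stated, so treat it as an assumption. The sketch below is a simplified greedy model, not the kube-scheduler algorithm, with hypothetical zone names. It shows why an even spread limits the blast radius of a zone outage.

```python
def spread_across_zones(replicas: int, zones: list[str]) -> dict[str, int]:
    """Place pods one at a time into the least-loaded zone (skew stays <= 1)."""
    counts = {zone: 0 for zone in zones}
    for _ in range(replicas):
        target = min(zones, key=lambda z: counts[z])  # ties go to the first zone listed
        counts[target] += 1
    return counts


def reschedule_after_zone_loss(counts: dict[str, int], failed_zone: str) -> dict[str, int]:
    """Move the failed zone's pods onto surviving zones, least-loaded first."""
    survivors = {z: n for z, n in counts.items() if z != failed_zone}
    surviving_zones = list(survivors)
    for _ in range(counts[failed_zone]):
        target = min(surviving_zones, key=lambda z: survivors[z])
        survivors[target] += 1
    return survivors


def skew(counts: dict[str, int]) -> int:
    """Difference between the most- and least-loaded zones."""
    return max(counts.values()) - min(counts.values())


if __name__ == "__main__":
    placed = spread_across_zones(7, ["zone-a", "zone-b", "zone-c"])
    print(placed, "skew:", skew(placed))
    print(reschedule_after_zone_loss(placed, "zone-a"))
```

Losing one of three zones removes at most a third of the pods (rounded up), and the replacements land on the surviving zones, provided those zones have spare capacity.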

Implemented Infrastructure as Code

We used Terraform to define everything from networking and autoscaling to IAM roles, DNS, and storage. Teams can now manage infrastructure with version-controlled code. It’s like having a digital blueprint that automatically builds the house exactly the same way every time.

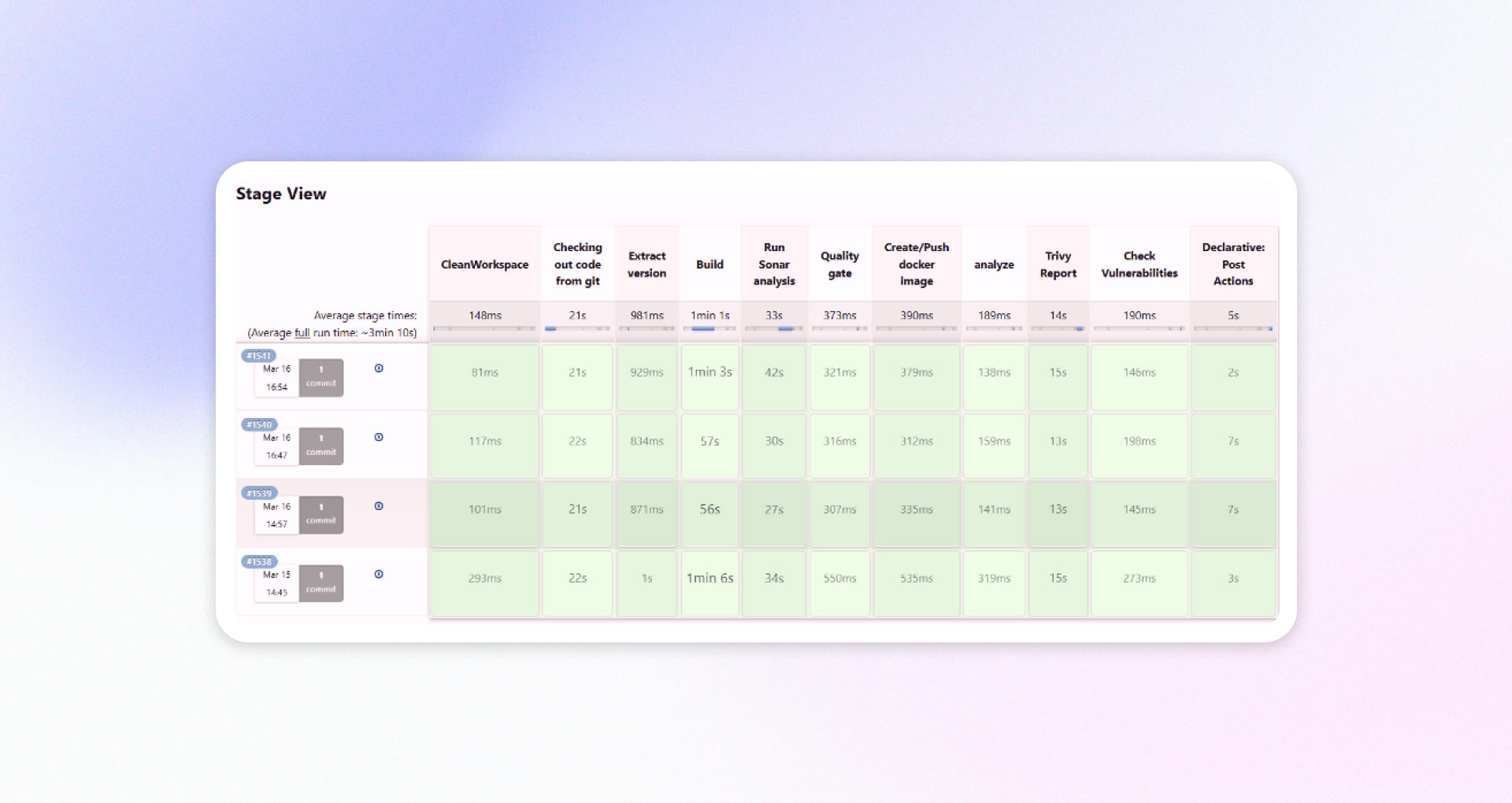

Streamlined, GitOps-Driven Delivery

To modernize how code gets to production, we integrated Flux and Jenkins. We shifted to a GitOps model where the “source of truth” for deployments lives in Git repositories. When developers are ready to release, they push code to a repository, and the system handles the rest safely and predictably.
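At the heart of a GitOps model is a reconciliation loop. Flux controllers periodically compare the manifests declared in Git with what is running in the cluster and apply the difference, optionally pruning objects that were removed from Git. The case study does not describe how Flux and Jenkins divide the work, so the sketch below covers only that reconciliation idea. `plan_reconciliation` and the resource names are hypothetical, and this is not Flux's API.

```python
def plan_reconciliation(desired: dict[str, dict], live: dict[str, dict],
                        prune: bool = True) -> dict[str, list[str]]:
    """Compare desired state (declared in Git) with live cluster state.

    Keys are resource identifiers such as "Deployment/api"; values are their
    specs. Returns the actions needed to make the live state match Git.
    """
    plan = {
        "create": sorted(k for k in desired if k not in live),
        "update": sorted(k for k in desired if k in live and live[k] != desired[k]),
        "delete": [],
    }
    if prune:
        # Anything still running that is no longer declared in Git is removed.
        plan["delete"] = sorted(k for k in live if k not in desired)
    return plan


if __name__ == "__main__":
    desired = {"Deployment/api": {"image": "api:1.4"}, "Service/api": {"port": 80}}
    live = {"Deployment/api": {"image": "api:1.3"}, "Service/api": {"port": 80},
            "CronJob/legacy": {"schedule": "0 2 * * *"}}
    print(plan_reconciliation(desired, live))
```

Because the plan is computed from Git, a release is simply a commit. Rolling back means reverting that commit and letting the next reconciliation converge the cluster.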

Conducted Performance Testing and Capacity Engineering

We didn’t want to guess whether the system could handle a busy day; we wanted to know for sure. We used JMeter to simulate heavy traffic loads and stress-test the platform. The results helped us tune the platform to use just the right amount of capacity, scaling up when busy and back down when quiet.
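One common way to turn load-test results into a capacity plan is to find the highest request rate a single pod sustains within a latency objective, then size for peak traffic plus headroom. The sketch below assumes step-load results summarized from JMeter result files as `(requests_per_second, p95_latency_ms)` pairs. All numbers, the 200 ms objective, and the function names are hypothetical, not figures from this engagement.

```python
def sustainable_rps(samples: list[tuple[int, float]], p95_slo_ms: float) -> int:
    """Highest tested request rate whose p95 latency still met the objective."""
    passing = [rps for rps, p95_ms in samples if p95_ms <= p95_slo_ms]
    if not passing:
        raise ValueError("no load step met the latency objective")
    return max(passing)


def replicas_needed(peak_rps: int, per_pod_rps: int, headroom_pct: int) -> int:
    """Pods required to serve peak traffic plus headroom, rounded up."""
    required = peak_rps * (100 + headroom_pct)
    capacity = per_pod_rps * 100
    return -(-required // capacity)  # integer ceiling division


if __name__ == "__main__":
    # Hypothetical single-pod ramp: latency degrades sharply past 150 rps.
    steps = [(50, 80.0), (100, 95.0), (150, 140.0), (200, 420.0)]
    per_pod = sustainable_rps(steps, p95_slo_ms=200)
    print(per_pod, replicas_needed(peak_rps=1200, per_pod_rps=per_pod, headroom_pct=30))
```

This assumes throughput grows roughly linearly with pod count, which is worth confirming with a multi-pod test because shared dependencies such as databases often become the real bottleneck.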

Automated Backup and Disaster Recovery

We implemented AWS Backup to automatically protect storage volumes and application data. Backups are stored across regions, and we regularly test restores to ensure disaster recovery works when needed.
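AWS Backup handles scheduling and cross-region copies, but a restore test still needs a check that the restored data matches what was backed up. The sketch below shows one such check under stated assumptions. It compares SHA-256 digests of file contents held in memory, where `source` stands for the data as it existed when the backup was taken. It does not call any AWS API, and the function names are hypothetical. Database restores would typically also be validated with application-level queries.

```python
import hashlib


def checksum_manifest(files: dict[str, bytes]) -> dict[str, str]:
    """Map each file path to the SHA-256 digest of its contents."""
    return {path: hashlib.sha256(data).hexdigest() for path, data in files.items()}


def verify_restore(source: dict[str, bytes], restored: dict[str, bytes]) -> list[str]:
    """Return a sorted list of problems; an empty list means the restore matched."""
    expected = checksum_manifest(source)
    actual = checksum_manifest(restored)
    problems = [f"missing: {p}" for p in expected if p not in actual]
    problems += [f"corrupted: {p}" for p in expected
                 if p in actual and actual[p] != expected[p]]
    problems += [f"unexpected: {p}" for p in actual if p not in expected]
    return sorted(problems)


if __name__ == "__main__":
    backup_time = {"db/orders.sql": b"INSERT 1", "uploads/a.png": b"\x89PNG"}
    print(verify_restore(backup_time, dict(backup_time)) or "restore verified")
```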

Full Observability and Monitoring Stack

We deployed a complete monitoring stack (Prometheus, Grafana, and ELK) that acts like a cockpit for the engineering team. Engineers can now see exactly what’s happening inside the system in real time and fix small hiccups before they turn into big problems.
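Prometheus collects metrics by scraping HTTP endpoints that expose a plain-text exposition format, and Grafana dashboards then query Prometheus. In production, services would normally use an official client library such as `prometheus_client` for Python. The stdlib-only sketch below renders one metric family by hand, purely to show what the scraped format looks like. The metric names and label values are hypothetical.

```python
def _escape_label_value(value: str) -> str:
    # The exposition format requires escaping backslash, double quote, and newline.
    return value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


def render_metric(name: str, help_text: str, metric_type: str,
                  samples: list[tuple[dict[str, str], float]]) -> str:
    """Render one metric family in Prometheus text exposition format."""
    help_escaped = help_text.replace("\\", "\\\\").replace("\n", "\\n")
    lines = [f"# HELP {name} {help_escaped}", f"# TYPE {name} {metric_type}"]
    for labels, value in samples:
        # Sorted label names keep the output stable between scrapes.
        label_str = ",".join(
            f'{key}="{_escape_label_value(val)}"' for key, val in sorted(labels.items())
        )
        selector = f"{name}{{{label_str}}}" if label_str else name
        lines.append(f"{selector} {value}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_metric(
        "http_requests_total", "Total HTTP requests.", "counter",
        [({"method": "GET", "status": "200"}, 1027),
         ({"method": "POST", "status": "500"}, 3)],
    ), end="")
```

An alert rule could then fire when the rate of `status="500"` requests rises, which is the kind of early signal this section describes.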

Outcomes

Reduction in manual operations

Faster release cycles

Faster incident detection and response
