You are building or evaluating an AI chatbot with RAG because a standard chatbot does not meet your organization’s operational needs. An AI-powered chatbot may sound like the right solution, but without the right retrieval architecture, it does not truly understand your business. It can miss recent policy updates, fail to understand internal context, or generate responses that are not grounded in your actual data. That is why the real difference is not just the model, but the architecture that governs how the system retrieves and uses knowledge across your core workflows.

The market is clearly moving in this direction. The global chatbot market is projected to grow from $9.3 billion in 2025 to $32.45 billion by 2031 at a 23% CAGR. At the same time, the RAG market is expected to grow from $1.92 billion in 2025 to $10.2 billion by 2030. Together, these projections suggest that enterprises are beginning to look beyond generic AI experiences and invest in RAG-powered AI chatbots for greater speed and accuracy.

This blog is written for teams that already understand AI chatbots and now want to make them work at enterprise scale. We’ll break down where traditional chatbots fail, how RAG solves these challenges architecturally, where the strongest ROI is emerging, and how to build a RAG chatbot.

Where Standard AI Chatbots Break Down at the Enterprise Level and How RAG Addresses It

Enterprises may already be seeing good results from adding LLM capabilities to their chatbots. However, as the technology moves quickly, questions about output quality and scalability are growing too. This is where RAG integration gives enterprises more to rely on in terms of accuracy, context awareness, and up-to-date knowledge. Let us examine where standard AI chatbots fall short and why they need to be backed by RAG.

- Hallucination: The term is widely known but still often ignored. A hallucination is a response that sounds accurate but is wrong: the model fills gaps with plausible, fabricated information. In client-facing or critical workflows, this makes a standard AI chatbot a real risk.

- Stale knowledge: Enterprise data keeps changing, whether it is pricing, policies, product specifications, or compliance requirements. But a chatbot trained months ago has no idea what changed after that. So even if the response sounds right, it may already be outdated.

- Lack of proprietary context: LLMs trained on public internet data do not know your internal documents, customer history, contracts, or standard operating procedures. So if you ask a standard chatbot to help a support agent with account-specific information, it is like asking a new hire to handle a complex customer case without access to your systems.

- Compliance and auditability gaps: In industries like finance, healthcare, and legal, every chatbot response may need to be linked back to a clear and verifiable source. A standard LLM does not give that framework by default. RAG does.

With our top-tier expertise in AI/ML Development Services, we have helped enterprises address these challenges through smarter, well-grounded AI architectures. The real differentiator is not just a better LLM, but the right architecture behind it.

The Standard AI Chatbot vs. the RAG-Integrated AI Chatbot

| Capability | Standard AI Chatbot | RAG-Integrated AI Chatbot |
| --- | --- | --- |
| Answers from | Fixed training data or scripted flows | Your live knowledge base, databases, and APIs retrieved in real time |
| Knowledge freshness | Static, degrades as your business evolves | Always current, reindexed as your data changes |
| Domain accuracy | Generic, does not know your products, policies, or terminology | Fully aligned with your business terminology, workflows, and context |
| System awareness | None, isolated from CRM, ERP, and databases | Integrated with CRM, ERP, EHR, MES, ticketing systems, and more |
| Personalization | Role-agnostic, same answer for every user | Role-aware, responses filtered by user identity and permissions |
| Hallucination control | None, generates confident but potentially incorrect answers | Grounded in retrieved sources, every answer is based on real data |
| Audit and explainability | Black box, no source tracing | Source-cited responses, traceable for compliance and quality review |
| Integration capability | Minimal, limited to basic webhook triggers | Deep integration, reads and writes across enterprise systems of record |

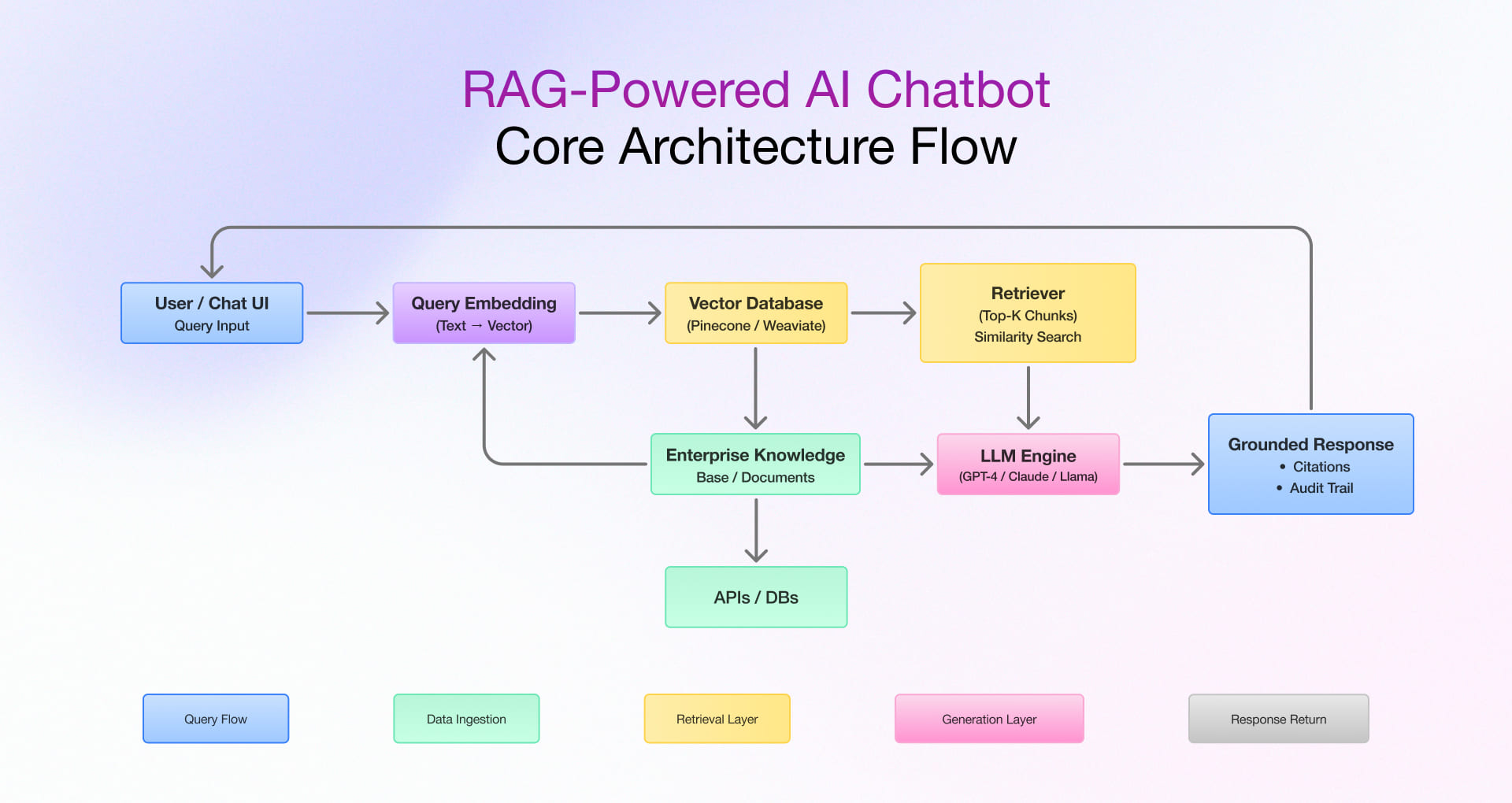

Core Architecture of a RAG-Based AI Chatbot

A RAG-based AI chatbot does not replace the LLM. It strengthens the AI chatbot by connecting the language model to a real-time retrieval system built on your proprietary data. This allows the chatbot to generate responses that are grounded in what your organization actually knows, rather than relying only on what the model learned during training. For enterprises, that means the chatbot can respond with greater relevance, accuracy, and traceability across real business interactions.

The Three-Layer RAG Pipeline

Layer 1: Knowledge Layer

This layer builds the knowledge foundation that powers the AI chatbot.

- Ingestion and Indexing: Enterprise data from PDFs, SharePoint, CRM, ERP, and knowledge bases is collected and prepared for retrieval.

- Chunking and Embedding: The content is broken into meaningful sections and converted into vector embeddings for semantic search.

- Vector Database: These embeddings are stored in a vector database so the chatbot can quickly access relevant business information.

- Continuous Updates: The knowledge base is refreshed as source data changes, keeping the chatbot aligned with the latest information.
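The knowledge layer described above can be sketched in a few lines. This is a minimal illustration under loud assumptions, not a production design: `embed` is a toy bag-of-words stand-in for a real embedding model, and `InMemoryIndex` is a hypothetical stand-in for a vector database. The function names and the 40-word chunk size are illustrative choices, not part of any specific product.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, max_words: int = 40) -> list[str]:
    """Split text on blank lines, then cap each chunk at max_words words."""
    chunks = []
    for para in re.split(r"\n\s*\n", text.strip()):
        words = para.split()
        for i in range(0, len(words), max_words):
            chunks.append(" ".join(words[i:i + max_words]))
    return chunks


def embed(text: str) -> Counter:
    """Toy embedding (assumption): term counts instead of a learned vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class InMemoryIndex:
    """Hypothetical stand-in for a vector database."""

    def __init__(self) -> None:
        self.rows: list[tuple[str, Counter, str]] = []  # (chunk, vector, source)

    def add_document(self, source: str, text: str) -> None:
        # Ingestion: chunk the document, embed each chunk, store it with its source.
        for chunk in chunk_text(text):
            self.rows.append((chunk, embed(chunk), source))

    def search(self, query: str, k: int = 3) -> list[tuple[float, str, str]]:
        # Retrieval: score every stored chunk against the query, return the top k.
        qv = embed(query)
        scored = [(cosine(qv, vec), chunk, source) for chunk, vec, source in self.rows]
        return sorted(scored, key=lambda row: row[0], reverse=True)[:k]
```

In a real deployment the embedding call and the index would be replaced by your chosen model and vector store; the shape of the flow (chunk, embed, store with source, search) stays the same.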

Layer 2: Intelligence Layer

This layer helps the chatbot understand the query and retrieve the right context.

- Query Processing: The chatbot interprets the user’s question and prepares it for retrieval.

- Retrieval Engine: It searches the vector database to identify the most relevant content for the query.

- Augmentation: The retrieved information is added to the prompt so the model works with grounded business context.

- Orchestration and Access Control: Business rules, workflow logic, and permissions ensure the chatbot uses only relevant and authorized data.
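The augmentation step above can be illustrated with a small prompt builder. This is a sketch only: `RetrievedChunk`, `normalize_query`, and `build_prompt` are hypothetical names, the instruction wording is an assumption, and the chunks passed in are assumed to have already been filtered for the user's permissions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RetrievedChunk:
    text: str
    source: str


def normalize_query(raw: str) -> str:
    """Collapse stray whitespace so the prompt carries a clean question."""
    return " ".join(raw.split())


def build_prompt(question: str, chunks: list[RetrievedChunk]) -> str:
    """Place numbered, source-labeled context ahead of the user's question."""
    context = "\n".join(
        f"[{i}] ({c.source}) {c.text}" for i, c in enumerate(chunks, start=1)
    )
    return (
        "Answer using only the numbered context below and cite sources by number. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {normalize_query(question)}"
    )
```

Numbering the chunks in the prompt is what later lets the response carry citations back to specific sources.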

Layer 3: Interaction Layer

This layer turns retrieved context into a usable chatbot response.

- LLM Response Generation: The model generates a response using both the query and the retrieved enterprise knowledge.

- Response Formatting: The answer is structured in a clear, conversational format suited to the use case.

- Citations and Auditability: Source references can be attached to improve trust, traceability, and compliance.

- User Interface and Governance: The chatbot delivers the final response through the chosen interface while maintaining security, privacy, and monitoring controls.

The diagram above illustrates how a user query travels through the embedding layer, triggers a vector similarity search against your indexed enterprise knowledge base, retrieves the most relevant document chunks, and feeds them as context into the LLM, which then returns a grounded, citable response.
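To make the "citable response" idea concrete, here is a minimal sketch of attaching sources to an answer. It assumes, purely for illustration, that the model was asked to cite context with markers like `[1]` and that `sources` lists the retrieved documents in the same order; `attach_citations` is a hypothetical helper, not an existing library function.

```python
import re


def attach_citations(answer: str, sources: list[str]) -> dict:
    """Map [n] markers in the answer back to the n-th retrieved source."""
    cited = sorted({int(n) for n in re.findall(r"\[(\d+)\]", answer)})
    # Ignore markers that point outside the retrieved set.
    valid = [n for n in cited if 1 <= n <= len(sources)]
    return {
        "answer": answer,
        "citations": [{"marker": n, "source": sources[n - 1]} for n in valid],
        "uncited": not valid,  # flag answers with no traceable source
    }
```

An answer flagged as `uncited` is a natural candidate for review or for a fallback message in regulated workflows.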

Where an Industry-Specific AI Chatbot with RAG Integration Creates the Most Value

The value a RAG-integrated AI chatbot delivers depends on how closely it aligns with your industry’s specific workflows and business model. Here are five core verticals where a RAG-based AI chatbot creates impact.

FinTech: Compliance-Safe Chatbots That Serve Both Customers and Analysts

Fintech organizations face a dual challenge: delivering fast, personalized customer experiences while operating under strict regulatory obligations. Standard chatbots fail at the second requirement. An AI chatbot with RAG solves both.

- Customer-facing advisory bots grounded in current product terms, interest rates, and regulatory disclosures, reducing the compliance risk of hallucinated financial guidance

- Analyst-facing compliance assistants retrieving RBI, SEC, FCA, or SEBI documentation in real time, reducing regulatory research time from hours to minutes

- Loan and credit chatbots accessing live credit policy, scoring model parameters, and underwriting guidelines, enabling faster, more consistent decisions

- Fraud investigation copilots that pull transaction history, risk scores, and case notes into a single conversational interface for investigation teams

HealthTech: Clinical and Operational AI That Cannot Afford to Be Wrong

In healthcare, every AI answer carries consequences. A RAG-based AI chatbot ensures that patient-facing and clinician-facing interactions are grounded in verified, approved content.

- Patient support chatbots answering symptom, medication, and appointment queries from approved clinical content, with escalation protocols built into the conversation flow

- Clinical documentation assistants retrieving patient history, lab results, and care guidelines, which can reduce documentation time by up to 50 percent

- HIPAA-compliant internal bots for billing, prior authorization, and insurance eligibility, connected directly to payer APIs and internal policy systems

- Staff onboarding and training bots grounded in hospital SOPs, clinical protocols, and compliance training materials

Digital Manufacturing: From Shop Floor Queries to Intelligent Operations

Manufacturing environments generate enormous operational knowledge, such as equipment manuals, maintenance logs, safety procedures, and quality checklists, most of it locked in PDFs and legacy systems. A RAG chatbot puts this knowledge in the hands of the people who need it, when they need it.

- Equipment troubleshooting bots for field technicians, retrieving technical manuals, fault histories, and repair procedures through natural language queries, reducing mean time to repair

- Production floor assistants answering shift supervisor queries against live MES and SCADA data, such as shift performance, yield rates, and downtime logs, using RAG conversational AI

- Quality and compliance chatbots surfacing inspection checklists, ISO standards, and defect history during active production runs

- Procurement and supply chain bots providing real-time inventory, vendor status, and delivery schedule information through conversational interfaces

AdTech: Faster Campaign Intelligence, Fewer Dashboard Headaches

In AdTech, decisions are made at machine speed. Campaign managers, media buyers, and data analysts need answers now, not after navigating multiple platforms. This is where a RAG-based AI chatbot becomes critical.

- Campaign performance bots querying live analytics, budget utilization, and impression data through plain-language questions, no SQL required

- Audience and segmentation assistants retrieving targeting rules, bid strategy parameters, and audience performance data on demand

- Creative review bots checking ad copy and creative assets against brand guidelines and compliance standards in real time

- Reporting assistants generating natural-language summaries of campaign performance data for client-facing and internal stakeholders using RAG conversational AI

Retail and eCommerce: Chatbots That Know Your Catalog, Your Customers, and Your Inventory

- Product discovery chatbots grounded in live catalog and inventory data, recommending only what is available and correctly priced using a RAG chatbot

- Post-purchase support bots integrated with order management, fulfillment, and returns systems, handling order status, returns, and exchanges without human escalation

- Loyalty and promotions bots accessing real-time customer account data to deliver personalized offers and program information

Step-by-Step: How to Build Your First RAG-Based AI Chatbot

Most teams start with the wrong question (“Which LLM should we use?”) and end up with the wrong system. The six steps below are structured around the three layers that actually determine whether a RAG chatbot works in production: what it knows, what it retrieves, and how it responds. Each step is a leverage point. Miss one, and the others cannot compensate.

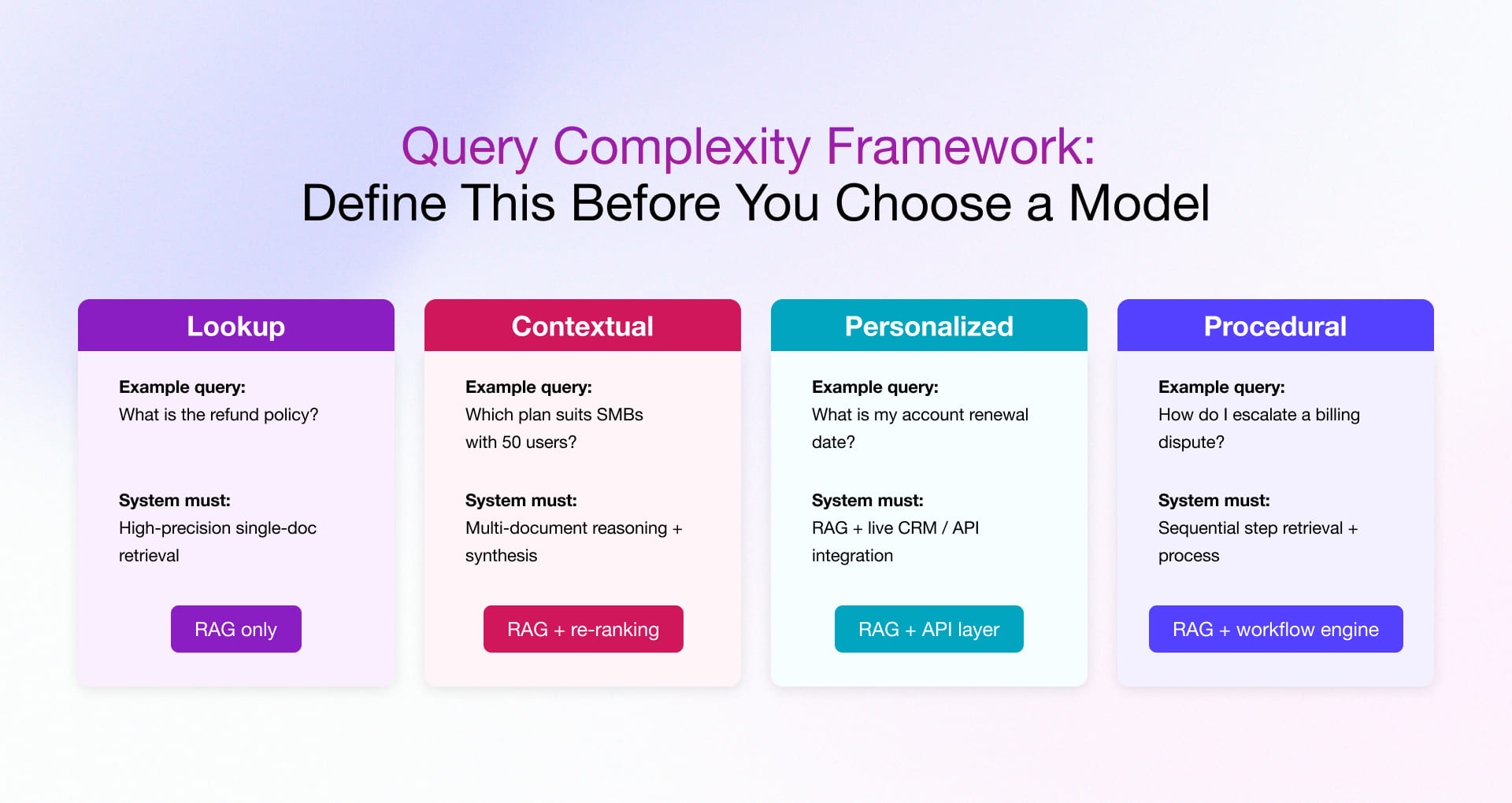

Step 1: Start with User Intent, Not the Model

Before you choose a model, vector database, or chunking strategy, you need to define who is asking, what decision they are trying to make, and how much the wrong answer will cost them. This single definition determines everything that follows.

Most enterprise chatbots face four distinct query patterns (lookup, contextual, procedural, and personalized), each requiring a different retrieval and architecture approach. Mapping these before you build prevents the most expensive architectural mistake in RAG projects: tuning the entire system for the wrong signal.

What This Step Actually Defines

- RAG-only vs. RAG + live API: If your dominant query type is Personalized (account status, order history), RAG alone is insufficient. You need real-time API integration alongside retrieval.

- Semantic vs. hybrid retrieval: Lookup queries can often be served by semantic search alone. Contextual and procedural queries benefit significantly from hybrid retrieval with re-ranking.

- Latency vs. accuracy trade-off: Customer-facing chatbots need sub-3-second responses. Internal compliance tools can tolerate 5–8 seconds in exchange for higher retrieval precision.

- Fallback strategy: High-stakes query types (clinical, legal, compliance) need explicit fallback logic that says “I cannot confirm this; please consult the source document.”
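The decisions above can be written down as an explicit plan per query type before any model is chosen. The sketch below is one hypothetical way to encode them: the `RetrievalPlan` fields and the `plan_for` helper are illustrative names, the 3-second and 8-second budgets come from the latency guidance above, and using hybrid retrieval for personalized queries is an assumption.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class RetrievalPlan:
    retrieval: str           # "semantic" or "hybrid"
    needs_live_api: bool     # True when RAG alone is insufficient
    latency_budget_s: float  # target response time in seconds
    strict_fallback: bool    # True for high-stakes domains


# Defaults per query pattern (customer-facing latency budget assumed).
PLANS = {
    "lookup": RetrievalPlan("semantic", False, 3.0, False),
    "contextual": RetrievalPlan("hybrid", False, 3.0, False),
    "procedural": RetrievalPlan("hybrid", False, 3.0, False),
    "personalized": RetrievalPlan("hybrid", True, 3.0, False),
}


def plan_for(query_type: str, high_stakes: bool = False,
             internal_tool: bool = False) -> RetrievalPlan:
    """Return the plan for a query type, adjusted for stakes and audience."""
    base = PLANS[query_type]
    return replace(
        base,
        # Internal compliance tools can trade latency for precision.
        latency_budget_s=8.0 if internal_tool else base.latency_budget_s,
        strict_fallback=high_stakes,
    )
```

For example, `plan_for("procedural", high_stakes=True, internal_tool=True)` yields a hybrid plan with an 8-second budget and strict fallback enabled.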

Step 2: Build the Right Knowledge Foundation

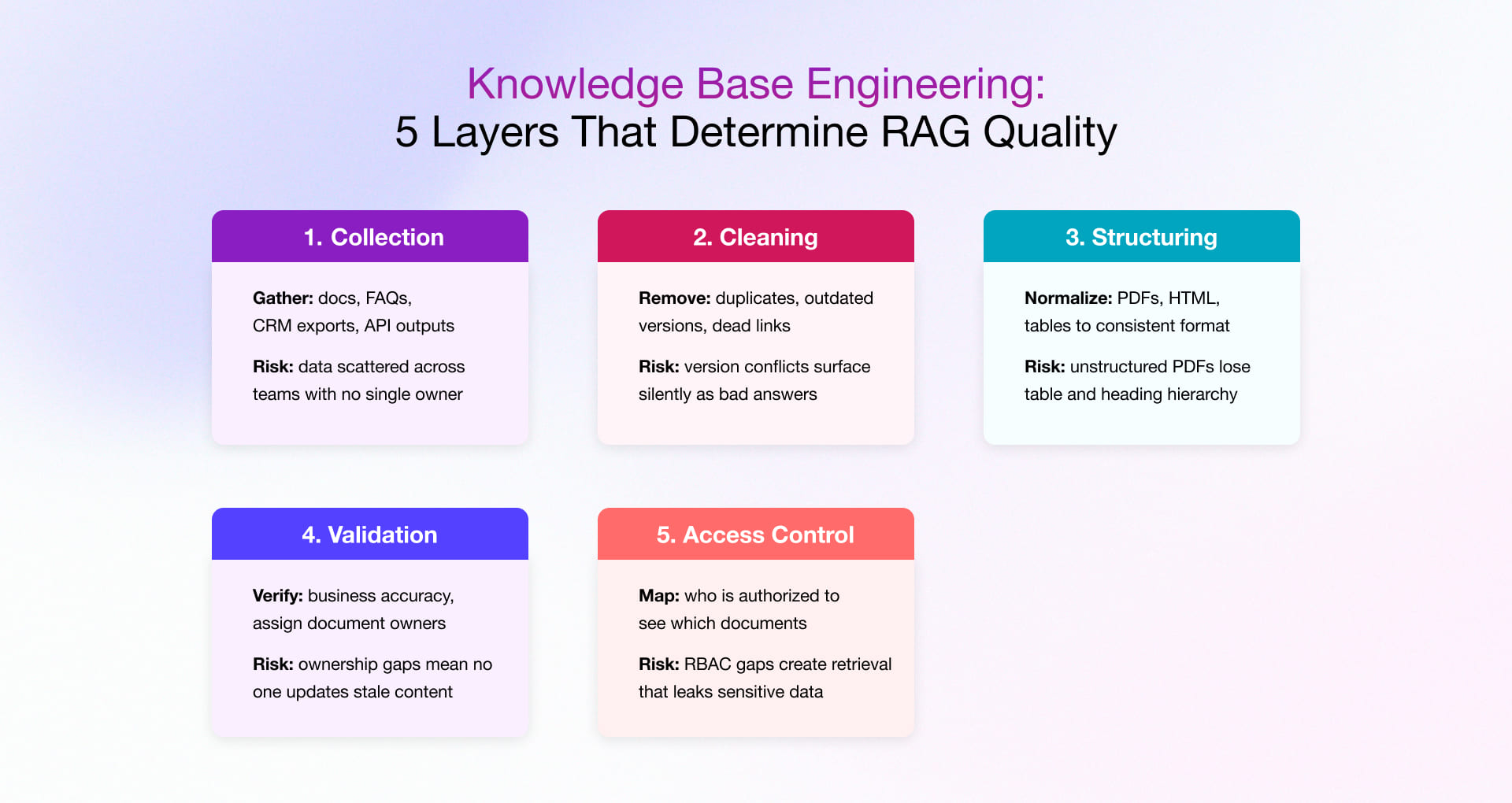

This is where the majority of RAG-based chatbot projects quietly fail: not at the model layer, but at the data layer. The right mental model: treat your knowledge base like a governed data platform, not a file upload exercise. Every document that enters the index is a potential answer your chatbot will give to a real user. That requires engineering discipline at every layer.

Three Questions Every Knowledge Audit Must Answer

- Who owns this content? Every indexed document needs a named owner responsible for keeping it current. Without this, the index silently drifts out of date.

- How often does it change? Define a refresh cadence for the vector index. High-velocity content (pricing, promotions, policies) needs event-driven sync. Reference content can be batch-refreshed weekly.

- Who is authorized to see it? Map access control requirements before indexing. RBAC at the retrieval layer prevents users from receiving answers grounded in documents they should not see.
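The three audit questions can be captured as fields on each indexed document and checked automatically. The following is a minimal sketch under assumptions: `IndexedDocument` and `audit` are hypothetical names, and measuring staleness as days since the last review is one simple policy among many.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class IndexedDocument:
    doc_id: str
    owner: str                # who owns this content?
    refresh_days: int         # how often does it change?
    allowed_roles: frozenset  # who is authorized to see it?
    last_reviewed: date


def audit(docs: list[IndexedDocument], today: date) -> list[tuple[str, str]]:
    """Return (doc_id, problem) pairs for documents that fail the audit."""
    problems = []
    for d in docs:
        if not d.owner:
            problems.append((d.doc_id, "missing owner"))
        if today - d.last_reviewed > timedelta(days=d.refresh_days):
            problems.append((d.doc_id, "stale"))
        if not d.allowed_roles:
            problems.append((d.doc_id, "missing access rule"))
    return problems
```

Running a check like this before each indexing job keeps ungoverned documents from silently entering the index.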

Technical Requirements

- Purpose-built parsers: PDFs and HTML require layout-aware extraction that preserves table structure and heading hierarchy. Generic text extraction destroys the structural context that makes retrieval accurate.

- Deduplication logic: Near-duplicate chunks in the index produce retrieval noise and contradictory answers. Implement semantic deduplication before indexing, not after.

- ETL/ELT pipelines: Build data engineering pipelines that sync source documents to the vector store on a defined schedule, or on change events for high-velocity content.
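As an illustration of pre-index deduplication, the sketch below drops near-duplicate chunks before they are stored. Note the swap: the text above calls for semantic deduplication, which would compare embeddings; this example uses word-shingle Jaccard overlap as a simpler lexical proxy so it can run without a model. The 0.8 threshold and the function names are assumptions.

```python
import re


def shingles(text: str, n: int = 3) -> set:
    """Return the set of n-word shingles in the text (lowercased)."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    if len(words) < n:
        return {tuple(words)}
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a: set, b: set) -> float:
    """Overlap between two shingle sets, from 0.0 to 1.0."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def deduplicate(chunks: list[str], threshold: float = 0.8) -> list[str]:
    """Keep a chunk only if it is not too similar to any chunk already kept."""
    kept, kept_shingles = [], []
    for chunk in chunks:
        s = shingles(chunk)
        if all(jaccard(s, k) < threshold for k in kept_shingles):
            kept.append(chunk)
            kept_shingles.append(s)
    return kept
```

The pairwise comparison is quadratic in the number of chunks, which is fine for a sketch; large indexes typically need an approximate method instead.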

Step 3: Prepare Content for Retrieval

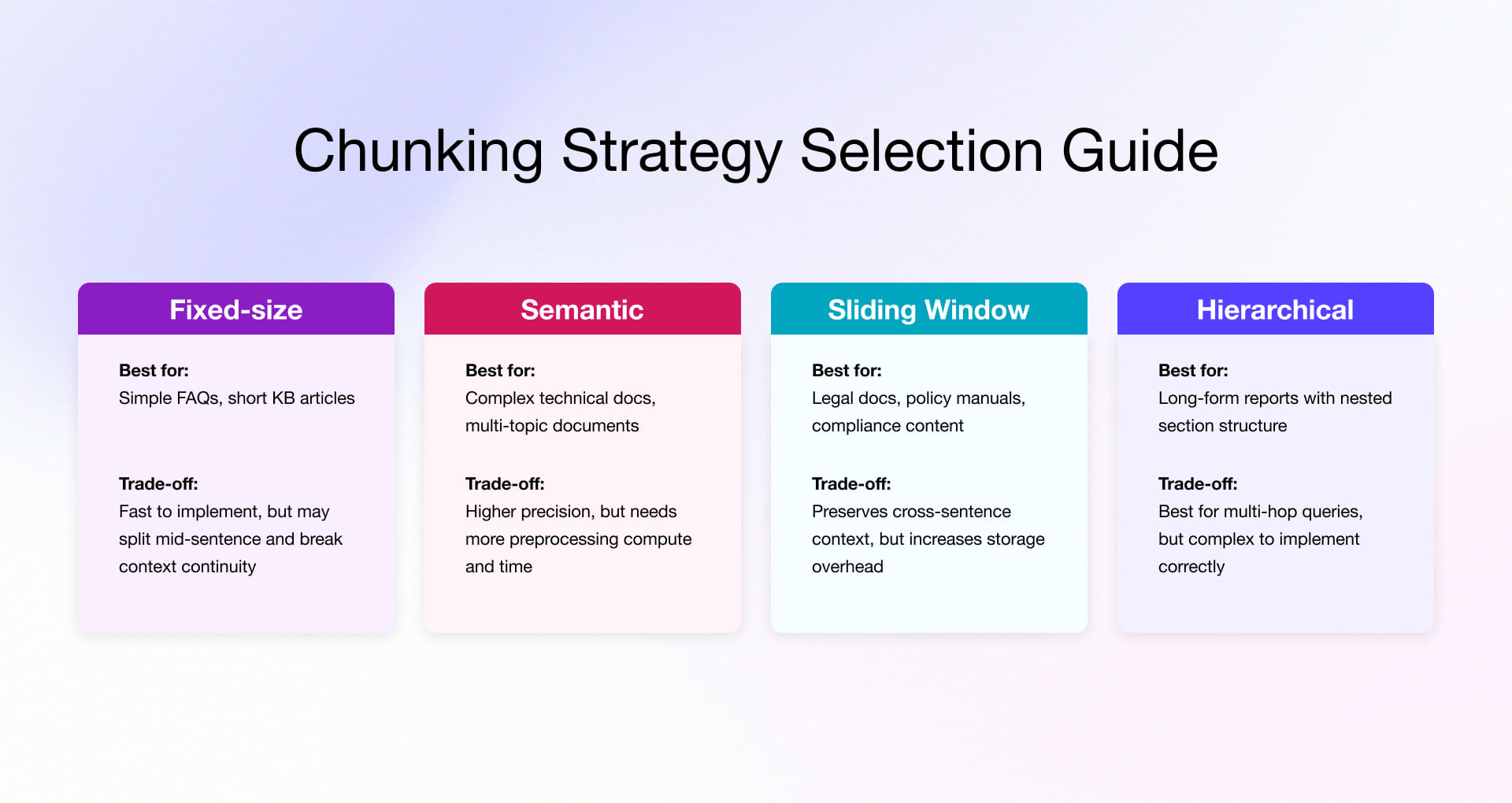

The way you split documents and convert them to vectors determines what the retrieval layer can and cannot find. Many hallucination issues in production RAG systems are not model failures; they are retrieval preparation failures: the right answer existed in the knowledge base, but the chunking strategy prevented it from being retrieved.

The Content-to-Retrieval Pipeline

Every document passes through six transformation stages before it can be retrieved. Each stage is an opportunity to improve precision and a point of failure if skipped:

- Raw Content: PDFs, HTML exports, database records, SharePoint files, CRM articles

- Cleaning & Normalization: Remove noise, fix encoding issues, standardize date formats, strip irrelevant boilerplate

- Chunking: Split at semantic boundaries matched to document type (see strategy guide above)

- Metadata Tagging: Tag each chunk with source, document date, category, department, and access level. This is the single most underinvested step.

- Embedding Generation: Use a domain-appropriate embedding model; general models underperform on highly technical or domain-specific language

- Vector Storage: Store with a parallel BM25 keyword index for hybrid retrieval, not vector-only
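The cleaning, chunking, and metadata tagging stages above can be sketched together. This example assumes, for illustration only, that the source has already been converted to markdown with `#` headings marking semantic boundaries; `TaggedChunk`, `clean`, and `chunk_by_heading` are hypothetical names, and the metadata fields mirror the ones listed above.

```python
import re
from dataclasses import dataclass


@dataclass
class TaggedChunk:
    text: str
    heading: str
    source: str
    doc_date: str
    department: str
    access_level: str


def clean(text: str) -> str:
    """Normalize non-breaking spaces and collapse runs of whitespace."""
    text = text.replace("\u00a0", " ")
    return re.sub(r"[ \t]+", " ", text).strip()


def chunk_by_heading(markdown: str, **metadata: str) -> list[TaggedChunk]:
    """Split at heading lines and tag every chunk with the same metadata."""
    sections, heading, buffer = [], "", []
    for line in markdown.splitlines():
        if line.startswith("#"):
            sections.append((heading, buffer))  # close the previous section
            heading, buffer = line.lstrip("#").strip(), []
        else:
            buffer.append(line)
    sections.append((heading, buffer))

    chunks = []
    for head, lines in sections:
        body = clean(" ".join(lines))
        if body:  # skip empty sections
            chunks.append(TaggedChunk(text=body, heading=head, **metadata))
    return chunks
```

Because the metadata travels with each chunk, later stages can filter by department or access level without going back to the source document.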

Two Advanced Decisions Most Teams Defer Too Long

- Multi-index design: Separate vector indexes for distinct knowledge domains (e.g., HR policy vs. product documentation vs. compliance) dramatically improve retrieval precision over a single monolithic index. Plan this architecture at Step 3, not after go-live.

- Embedding refresh strategy: Define what triggers a re-embedding when source documents update. Event-driven triggers (on document save) are ideal for high-velocity content. Scheduled refresh works for stable reference content.
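One simple way to decide what needs re-embedding is to compare a content hash of each source document against the hash recorded at indexing time. The sketch below assumes documents are available as plain text keyed by ID; `plan_refresh` is a hypothetical helper and could be called from either an event-driven trigger or a scheduled job.

```python
import hashlib


def content_hash(text: str) -> str:
    """Stable fingerprint of a document's content."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_refresh(current_docs: dict[str, str],
                 indexed_hashes: dict[str, str]) -> dict[str, list[str]]:
    """Compare current documents with indexed hashes and list the work to do."""
    # New or changed documents need (re-)embedding.
    to_embed = [
        doc_id for doc_id, text in current_docs.items()
        if indexed_hashes.get(doc_id) != content_hash(text)
    ]
    # Documents that no longer exist should be removed from the index.
    to_delete = [doc_id for doc_id in indexed_hashes if doc_id not in current_docs]
    return {"embed": sorted(to_embed), "delete": sorted(to_delete)}
```

Unchanged documents are skipped entirely, which keeps refresh cost proportional to how much content actually changed.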

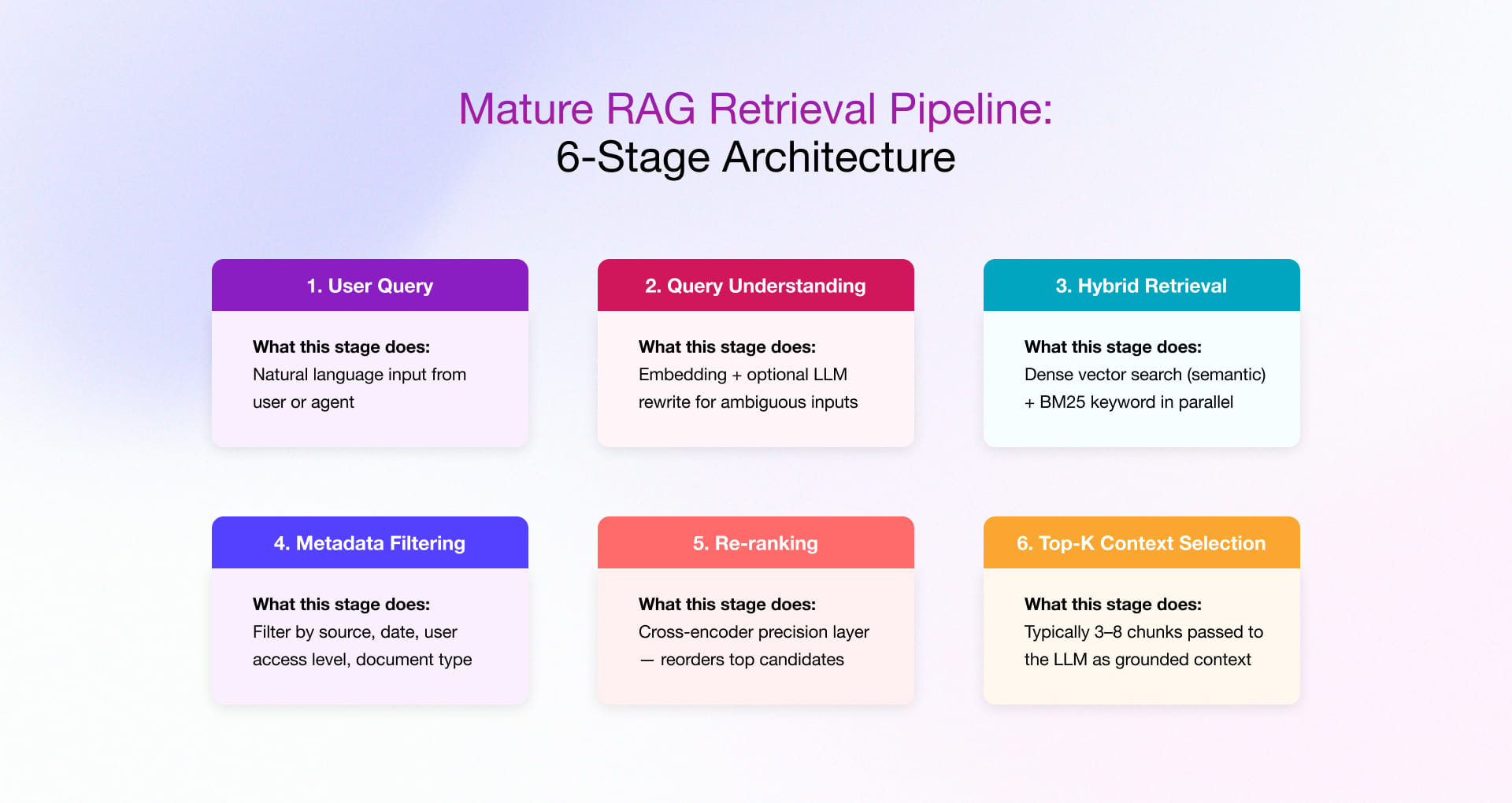

Step 4: Design Retrieval Like a Ranking Engine

If Step 3 prepares what the system knows, Step 4 determines what the model is allowed to see when a user asks a question. A basic retriever finds data. A production-grade retriever decides relevance under constraints: filtering by access control, re-ranking by semantic precision, and selecting the minimal context that maximizes answer quality while minimizing noise.
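The three constraints named above can be sketched as a single selection function. This is an illustration under assumptions: `Candidate` and `select_context` are hypothetical names, `score` stands in for whatever similarity or re-ranker score your retriever produces, and the default `top_k` and `min_score` values are placeholders to be tuned.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    text: str
    score: float              # similarity or re-ranker score, higher is better
    allowed_roles: frozenset  # roles permitted to see the source document


def select_context(candidates: list[Candidate], user_role: str,
                   top_k: int = 4, min_score: float = 0.3) -> list[Candidate]:
    """Filter by access, drop weak matches, and keep only the best few chunks."""
    # 1. Access control first, so unauthorized content never reaches the prompt.
    permitted = [c for c in candidates if user_role in c.allowed_roles]
    # 2. Score threshold removes low-relevance noise.
    relevant = [c for c in permitted if c.score >= min_score]
    # 3. Top-K keeps the context minimal.
    return sorted(relevant, key=lambda c: c.score, reverse=True)[:top_k]
```

Applying the access filter before ranking matters: a highly relevant but unauthorized chunk should never displace, or appear alongside, permitted content.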

Diagnosing Retrieval Failures

When your RAG system produces poor answers, the failure mode tells you exactly where to fix it. Use this diagnostic table before changing the LLM or rewriting prompts:

| Symptom in Production | Root Cause | Where to Fix |
| --- | --- | --- |
| Irrelevant answers returned | Retrieval surfacing off-topic chunks | Improve re-ranking; tighten metadata filters |
| Correct content exists but not found | Chunking breaks context; Top-K too small | Re-chunk semantically; increase Top-K; expand coverage |
| Contradictory answers | Multiple document versions in index | Deduplication + content governance (Step 2) |
| Overly verbose, rambling responses | Context window overloaded with chunks | Reduce Top-K; raise similarity score threshold |
| Slow response latency | Re-ranker model overhead too high | Cache frequent queries; use lighter re-ranker model |
| Answers miss user intent | Ambiguous query not rewritten before embedding | Add LLM query rewriting before retrieval |

Two Advanced Retrieval Capabilities

- Query rewriting: Use the LLM to rephrase ambiguous or conversational user inputs into precise retrieval queries before embedding. This single addition can improve recall by 25–40% on conversational enterprise chatbots, where users rarely phrase queries as clean keyword searches.

- Multi-hop retrieval: For complex queries requiring synthesis across multiple documents, retrieve in sequence, with each retrieval step informed by the result of the previous one. This is essential for the contextual and procedural query types identified in Step 1.
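Multi-hop retrieval, as described above, is essentially a loop in which each hop's results shape the next query. The sketch below shows only that control flow. Both callables are assumptions supplied by the caller: `retrieve` stands in for your retriever, and `next_query` stands in for an LLM call that proposes a follow-up query (or returns `None` when enough context has been gathered).

```python
from typing import Callable, Optional


def multi_hop_retrieve(
    question: str,
    retrieve: Callable[[str], list[str]],
    next_query: Callable[[str, list[str]], Optional[str]],
    max_hops: int = 3,
) -> list[str]:
    """Retrieve in sequence, letting each hop's results inform the next query."""
    context: list[str] = []
    query: Optional[str] = question
    for _ in range(max_hops):
        if query is None:
            break  # the follow-up step decided the context is sufficient
        new_hits = [hit for hit in retrieve(query) if hit not in context]
        if not new_hits:
            break  # nothing new was found, so further hops are unlikely to help
        context.extend(new_hits)
        query = next_query(question, context)
    return context
```

The `max_hops` cap bounds latency and cost, which matters because every hop adds at least one retrieval call and, typically, one model call.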

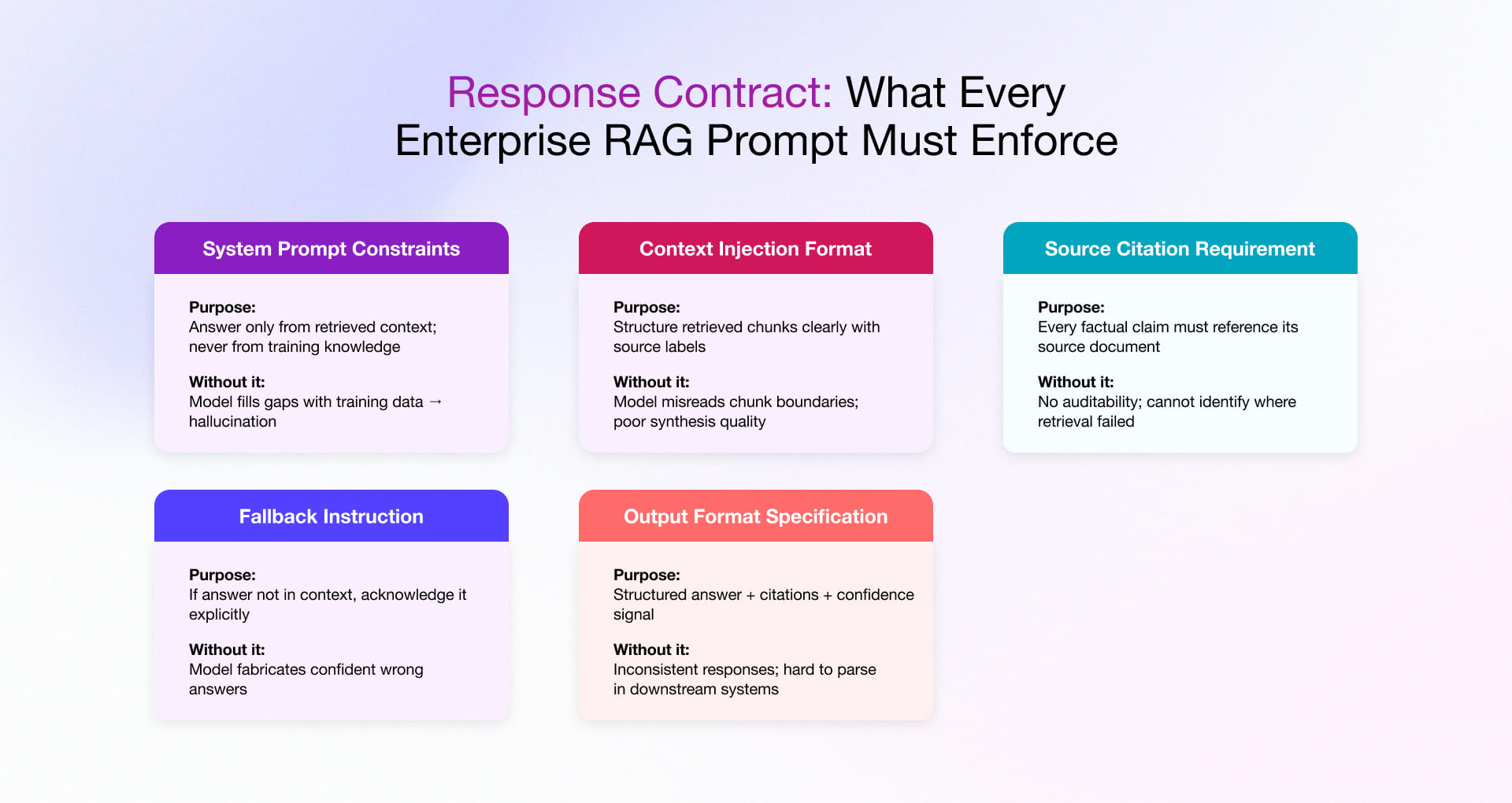

Step 5: Generate Responses That Are Grounded and Controlled

At this stage, the LLM is not reasoning from broad world knowledge; it is assembling an answer from constrained, retrieved context. That constraint is the feature, and the prompt architecture is what enforces it. This step is where enterprise trust in the chatbot is built or lost: a single confident wrong answer in a compliance or clinical context can set adoption back by months.

Instead of treating prompting as an afterthought, define a response contract: a set of explicit rules the model must follow in every response, regardless of query type or retrieved context.

Control Mechanisms That Belong in Every Enterprise Deployment

- Temperature: Set between 0.0 and 0.2 for factual, compliance-sensitive, or process-oriented responses. Higher temperatures increase variety but decrease fidelity to retrieved context, which is the wrong trade-off for enterprise knowledge retrieval.

- Token limits: Prevent context window overload that degrades response coherence. Limiting retrieved context to the top 3–5 chunks (rather than 10+) improves response precision for most enterprise query types.

- Guardrails: Topic and content filters that prevent the model from responding to out-of-scope queries or generating responses in restricted domains (legal advice, medical diagnosis, financial recommendations).

- Structured output: Enforce JSON or defined schema outputs to make responses parseable by downstream systems such as ticketing integrations, dashboards, and CRM update workflows.

- Fallback logic: When retrieved context does not contain a sufficient answer, the model must explicitly acknowledge this rather than fabricate. Phrase it as: “I could not find this in the current knowledge base; please consult [source] directly.”
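Several of these controls can be enforced in code rather than left to the prompt alone. The sketch below assumes, for illustration, that the model is instructed to return JSON with `answer` and `sources` fields; the field names, `GENERATION_SETTINGS`, and `parse_response` are hypothetical, and the settings are not tied to any specific model API.

```python
import json

# Illustrative settings drawn from the ranges above (not a specific API's fields).
GENERATION_SETTINGS = {"temperature": 0.1, "max_context_chunks": 4}

FALLBACK = (
    "I could not find this in the current knowledge base; "
    "please consult the source document directly."
)


def _fallback() -> dict:
    return {"answer": FALLBACK, "sources": [], "fallback": True}


def parse_response(raw: str) -> dict:
    """Validate structured model output; fall back if it is malformed or unsourced."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return _fallback()
    if not isinstance(data, dict):
        return _fallback()
    answer, sources = data.get("answer"), data.get("sources")
    # An answer without at least one source is treated as ungrounded.
    if not isinstance(answer, str) or not answer.strip():
        return _fallback()
    if not isinstance(sources, list) or not sources:
        return _fallback()
    return {"answer": answer, "sources": sources, "fallback": False}
```

Treating an unsourced answer as a fallback is a deliberately strict choice; teams with lower-stakes use cases may prefer to pass such answers through with a warning instead.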

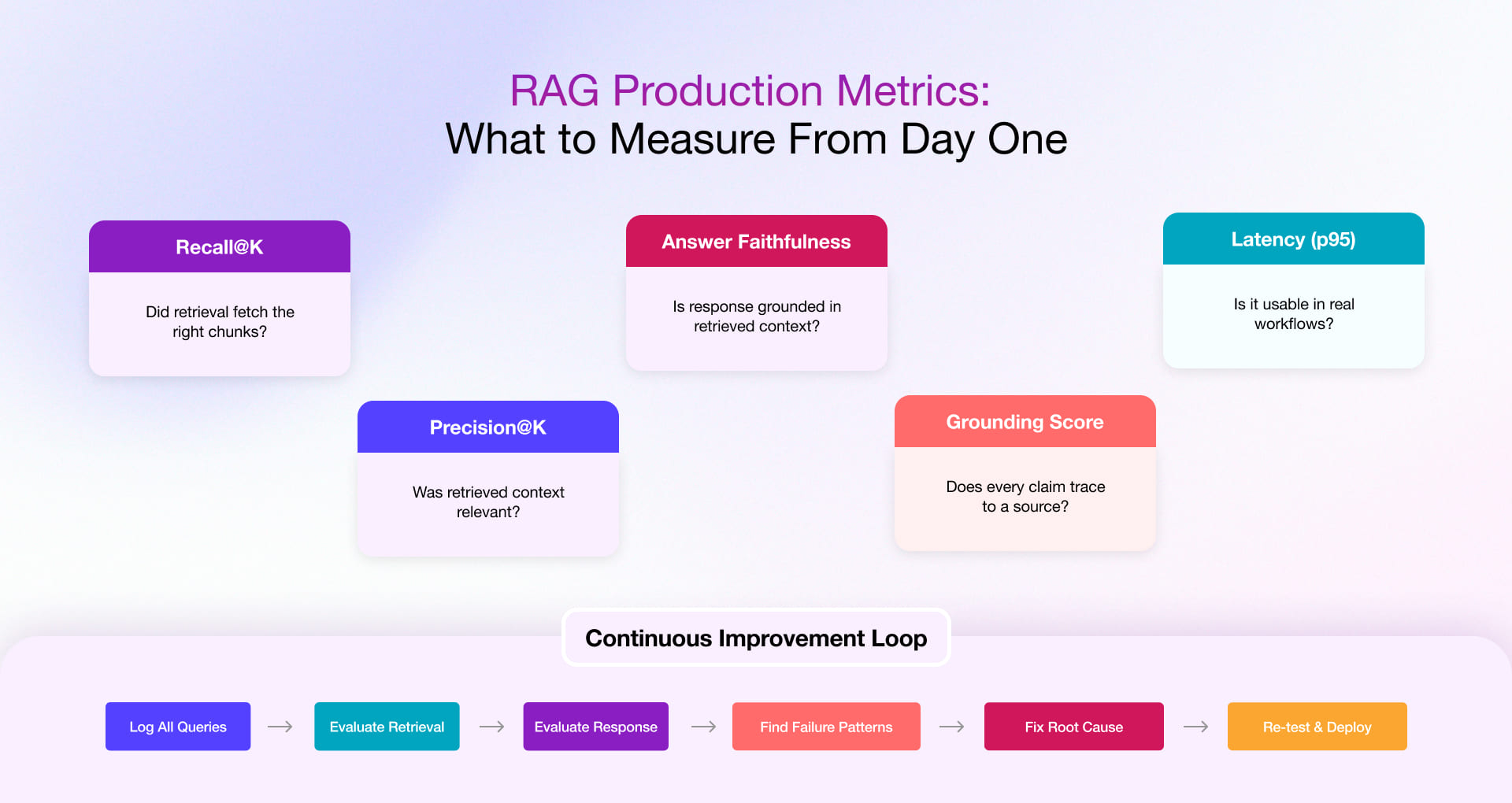

Step 6: Test, Measure, and Continuously Refine

Most teams treat testing as a one-time gate before launch. In production RAG, testing is an ongoing operational function. Your knowledge base will change. Your users’ query patterns will evolve. New document types will be added. Every one of these changes is a retrieval regression risk unless you’re measuring continuously.
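Continuous measurement usually starts with a small labeled set of queries and the document IDs that should be retrieved for each. The sketch below computes two standard retrieval metrics, recall@k and mean reciprocal rank (MRR), over such a set. The data shape (a list of `(retrieved_ids, relevant_ids)` pairs) and the function names are assumptions for illustration.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    """1 / rank of the first relevant result, or 0.0 if none was retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


def evaluate(runs: list[tuple[list[str], set[str]]], k: int = 5) -> dict[str, float]:
    """Average both metrics over all labeled queries."""
    if not runs:
        return {"recall_at_k": 0.0, "mrr": 0.0}
    n = len(runs)
    return {
        "recall_at_k": sum(recall_at_k(r, rel, k) for r, rel in runs) / n,
        "mrr": sum(reciprocal_rank(r, rel) for r, rel in runs) / n,
    }
```

Re-running the same labeled set after every index or chunking change turns a silent retrieval regression into a visible drop in these numbers.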

The Feedback Signals That Improve Systems Fastest

- Failed query logs: Queries that returned no answer, triggered a fallback, or led to immediate user escalation are retrieval failure maps. Cluster them by query type to identify which knowledge domains have coverage gaps.

- Human feedback: Thumbs-up/thumbs-down signals from end users, weighted by query type, provide the ground truth signal that automated metrics cannot replicate. A 15% thumbs-down rate on a specific query cluster is an actionable retrieval signal.

- “No answer” tracking: When the system correctly says “I don’t know,” log the query. These are knowledge base gaps, not model failures, and they’re fixable by adding the missing content to the index.
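The human feedback signal above can be turned into a simple alert. This sketch assumes feedback arrives as `(query_cluster, thumbs_up)` pairs and flags clusters whose thumbs-down rate reaches the 15% level mentioned above; `flag_clusters` and the `min_votes` floor, which avoids reacting to tiny samples, are illustrative assumptions.

```python
from collections import defaultdict


def flag_clusters(feedback: list[tuple[str, bool]],
                  threshold: float = 0.15,
                  min_votes: int = 20) -> dict[str, float]:
    """Return the thumbs-down rate for each cluster at or above the threshold."""
    counts = defaultdict(lambda: [0, 0])  # cluster -> [thumbs_up, thumbs_down]
    for cluster, thumbs_up in feedback:
        counts[cluster][0 if thumbs_up else 1] += 1

    flagged = {}
    for cluster, (up, down) in counts.items():
        total = up + down
        if total >= min_votes and down / total >= threshold:
            flagged[cluster] = round(down / total, 3)
    return flagged
```

Flagged clusters are a prioritized list of where to look first: missing content, poor chunking, or stale documents in that knowledge domain.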

Long-Term Operational Reality

- Content changes require embedding refresh: When source documents update, the corresponding vector embeddings must be refreshed. Plan this as scheduled operations, not one-time engineering tasks.

- User behavior changes require retrieval re-tuning: As users learn how to query the system, their phrasing patterns shift. Re-evaluate retrieval quality metrics quarterly and re-tune Top-K and score thresholds accordingly.

- New knowledge domains require scoped index expansion: Add new domains incrementally, one at a time, with quality gates before production.

Our dedicated engineering team ensures quarterly RAG performance reviews, retrieval quality benchmarking, and index refresh operations as part of the standard engagement.

Why Enterprises Choose Rishabh Software to Build Their AI Chatbots with RAG Capabilities

Rishabh Software brings over two decades of digital engineering experience and expertise to every AI engagement. We design and build RAG-powered AI chatbots from the ground up, with the enterprise architecture, integrations, and domain context they require.

Our value-driven RAG development and consulting services enable businesses to deploy retrieval-rich AI systems that modernize knowledge access and automate decision-making. We have served clients across industries, including FinTech, HealthTech, and digital manufacturing, so we understand not just how to build these systems, but how they need to perform in real business environments.

Whether you’re evaluating your current chatbot’s readiness for RAG or building a solution from scratch, our AI services can help you move forward with confidence and stay ahead in the next wave of digital transformation.